The High Cost of the Generate Button: Why Speed Breaks AI Creative Pipelines

It is 3:00 PM on a Tuesday. A creative team is tasked with producing a series of social assets for a Friday product launch. In the old world, this would involve a photographer, a retoucher, and a two-week lead time. In the current landscape, the team opens an interface, types a prompt, and hits “Generate.” Within ten minutes, they have 200 variations. On paper, they are 100 times more productive than they were three years ago. In reality, they are staring at 200 images that almost—but don’t quite—work.

This is the velocity trap. When teams prioritize raw output speed over granular control, the “efficiency” of generative AI becomes a mirage. The time saved in the creation phase is often lost in the curation and correction phases. For professional workflows, the bottleneck is no longer how fast an image can be rendered, but how much human intervention is required to make that image usable for a brand.

The Velocity Trap: When 1,000 Assets Equal Zero Utility

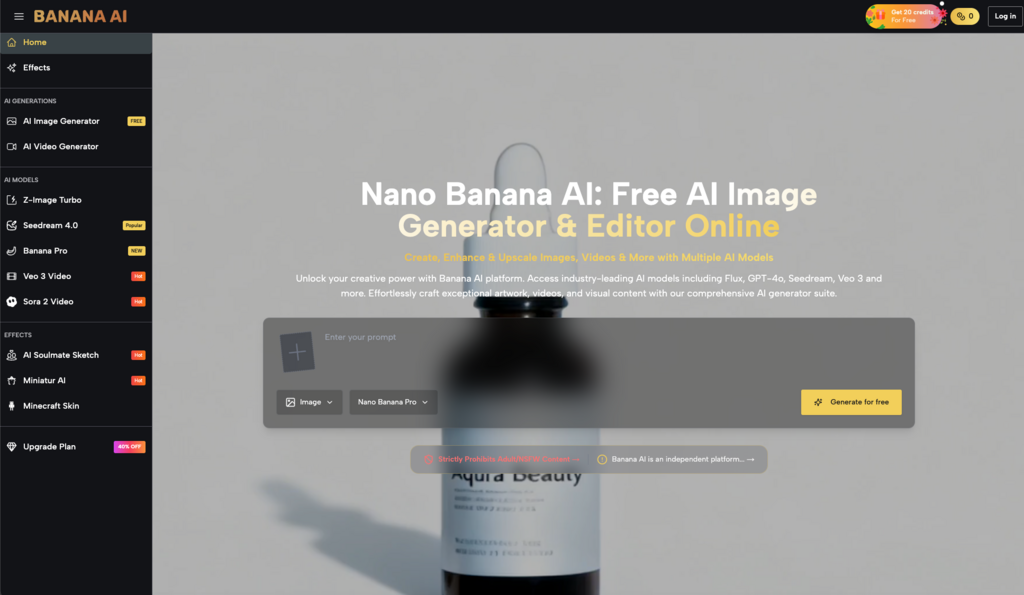

The most common mistake teams make when integrating tools like Banana AI Image into their workflow is treating the platform like a lottery. There is a psychological rush that comes with seeing a high-speed model return four variations in seconds. This encourages a “re-roll” culture: if the first set of images isn’t perfect, the user simply hits the button again.

While this works for personal experimentation or mood boarding, it is a catastrophic strategy for commercial production. Every “re-roll” adds to the curation debt. A creative lead who has to sort through 500 images to find one that doesn’t have a structural defect is not working efficiently; they are performing high-volume data entry.
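Curation debt can be given a rough number. The Python sketch below makes a simplifying assumption: each generation is usable independently, with some fixed probability. Real failures are correlated with the prompt and the model, so this is only an approximation. Under that geometric model, a reviewer inspects 1/p images on average before finding the first usable one, so review time grows directly with the re-roll rate. The function name and the per-image review time are hypothetical inputs, not figures from any real pipeline.

```python
def expected_review_minutes(p_usable: float, seconds_per_review: float) -> float:
    """Expected reviewer time (minutes) to find the first usable image.

    Assumes each image is independently usable with probability p_usable,
    so the number of images inspected follows a geometric distribution
    with mean 1 / p_usable.
    """
    if not 0 < p_usable <= 1:
        raise ValueError("p_usable must be in (0, 1]")
    expected_images = 1 / p_usable
    return expected_images * seconds_per_review / 60


# The scenario above: roughly 1 usable image in 500, with a hypothetical
# 10 seconds spent inspecting each one.
print(f"{expected_review_minutes(1 / 500, 10):.1f} minutes")
```

With those assumed inputs, the reviewer spends over 80 minutes finding a single keeper. None of that time shows up in the “ten minutes to generate” figure.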

Speed without control leads to “visual noise.” When you flood a Slack channel with variations instead of refining a single, controlled vision, you dilute the creative intent. The lottery approach relies on luck, whereas a professional pipeline must rely on predictability. More generations do not reliably produce a better final pick. Past a certain volume, they mostly raise the odds of settling for “good enough” because the team is too exhausted to keep looking.

Model Indifference: The Error of Using Turbo for Everything

A significant technical error occurs when operators fail to match the specific model to the creative objective. Within the Banana AI ecosystem, there is a temptation to default to the fastest option available, such as Z-Image Turbo, for every task. While Turbo models are exceptional for rapid prototyping and conceptual sketching, they often lack the compositional depth or anatomical precision required for final-mile assets.

Consider a campaign requiring a high-fidelity architectural interior with specific lighting requirements. A team optimized for speed might stay on a Turbo model because the iteration loop is five seconds. However, the “fastest” model may struggle with straight lines or the specific physics of light bounce, resulting in an image that requires three hours of manual Photoshop work to fix.

In contrast, switching to a more robust model like Seedream 4.0 or Banana Pro might take 20 seconds longer per generation, but the structural integrity is significantly higher. This is where “operator-style” judgment matters. You have to evaluate the trade-off: do you want 10 images that are 70% correct in one minute, or two images that are 95% correct in the same timeframe? For a professional team, the latter is always cheaper because it reduces the “human-in-the-loop” fix-it time.
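A back-of-the-envelope model makes the trade-off in that question concrete. This Python sketch rests on two hypothetical assumptions. First, repair time scales linearly with how far an image is from correct. It is calibrated so that a 70%-correct image needs the roughly three hours of retouching described above, which implies 10 hours for a fully wrong image. Second, every generated image costs a flat one minute of review. Both constants are illustrative, not measurements, so substitute your own team's numbers.

```python
from dataclasses import dataclass

# Hypothetical calibration: a 70%-correct image needs ~3 hours of manual fixes,
# so a 0%-correct image would need 10 hours if repair time scales linearly.
FULL_REWORK_HOURS = 10.0
REVIEW_MINUTES_PER_IMAGE = 1.0  # hypothetical curation cost per generated image


@dataclass
class Option:
    name: str
    images: int         # images produced in the same one-minute window
    correctness: float  # fraction of the brief each image already satisfies (0 to 1)


def human_hours(option: Option) -> float:
    """Human time to review every image, then fix the one that ships."""
    review = option.images * REVIEW_MINUTES_PER_IMAGE / 60
    fix = (1 - option.correctness) * FULL_REWORK_HOURS
    return review + fix


fast = Option("Turbo-style", images=10, correctness=0.70)
robust = Option("Pro-style", images=2, correctness=0.95)
for opt in (fast, robust):
    print(f"{opt.name}: {human_hours(opt):.2f} human hours")
```

Under these assumptions, the fast option costs about 3.17 human hours and the slower model about 0.53. The extra 20 seconds of render time barely registers next to the repair work.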

Visual Drift and the Seed Control Oversight

One of the hardest lessons for content teams to learn is the concept of “visual drift.” This occurs when a series of assets intended for the same campaign start to lose their aesthetic cohesion. Over a dozen generations, the lighting shifts from warm to neutral, the texture of a character’s clothing changes from linen to cotton, and the brand’s specific color palette begins to bleed into generic AI “neon-saturation.”

This drift is almost always a result of neglecting seed management and parameter control. Every image generated in Banana AI Image starts from a seed: a number that initializes the random noise the model gradually refines into an image. Most novice users leave the seed on “random,” essentially starting a brand-new visual universe with every click.

To maintain control, a workflow must move from “prompt-only” to “parameter-first.” By locking a seed or using image-to-image workflows, creators can keep the underlying structure consistent while iterating on specific details. There is an industry-wide limitation here. Even with a locked seed, changing the prompt, the camera angle, or other parameters alters the result in ways that are hard to predict, because generative models retain a degree of stochasticity. You cannot currently guarantee 100% pixel-perfect consistency across twenty different angles of a complex object. Expecting the tool to behave like a 3D CAD program is a recipe for frustration.
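Here is a minimal Python sketch of the “parameter-first” idea. All generation settings live in one immutable record, and each variation changes only the field under test while the seed stays locked. Diffusion-style models begin from random noise drawn from a seeded generator, so the same seed reproduces the same starting noise. Several pieces here are hypothetical stand-ins rather than Banana AI Image's API: the `GenerationParams` fields, the model identifier, and the use of Python's `random` module in place of a model's noise sampler.

```python
import random
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class GenerationParams:
    # Hypothetical parameter record; field names are illustrative only.
    model: str
    prompt: str
    seed: int


def initial_noise(params: GenerationParams, n: int = 8) -> list[float]:
    """First n values of the Gaussian starting noise for a render.

    Stand-in for a model's noise sampler: in this sketch the noise depends
    only on the seed, not on the prompt, so a locked seed keeps it fixed.
    """
    rng = random.Random(params.seed)
    return [rng.gauss(0.0, 1.0) for _ in range(n)]


base = GenerationParams(model="seedream-4.0", prompt="linen shirt, warm window light", seed=1234)

# Iterate on one detail while the seed (and thus the starting noise) stays fixed.
variant = replace(base, prompt="linen shirt, warm window light, sleeves rolled")

# "Random" seed mode: a fresh starting point with every click.
rerolled = replace(base, seed=random.randrange(2**32))

print(initial_noise(base) == initial_noise(variant))   # same starting noise
print(initial_noise(base) == initial_noise(rerolled))  # almost certainly different
```

Identical starting noise is a necessary condition for consistency, not a guarantee. The prompt still steers every denoising step, which is why the limitation about multiple angles still applies.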

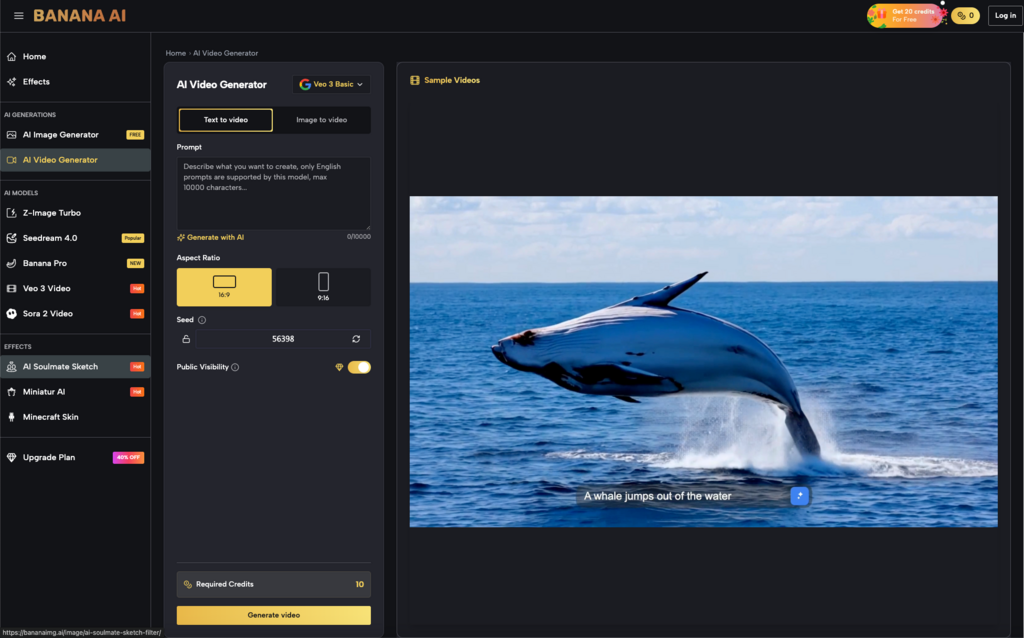

From Still to Motion: Why Fast Video Workflows Often Fail the Edit

As teams move from static images to video, the “speed vs. control” conflict intensifies. Generative video is compute-heavy and time-consuming, leading many teams to take shortcuts. The most common mistake is using pure text-to-video for specific product or brand demonstrations.

When you ask an AI to “show a person opening a laptop” via text, the model has too many degrees of freedom. The laptop might change color, the person’s hands might look distorted, or the background might warp. In a speed-focused workflow, the team might just keep re-prompting. In a control-focused workflow, the operator would use a high-quality static image generated in Banana AI as a reference for the Veo 3 model.

Using image-to-video provides a “visual anchor.” It strongly constrains the video model to respect the geometry and lighting of the source image. Teams that skip this step often find that their video assets don’t match their still assets, creating a disjointed brand experience. Furthermore, we must reset expectations regarding motion: while current models can handle simple pans or zooms, they often struggle with complex, multi-subject interactions. If your storyboard requires a character to tie their shoes while talking on a phone, a generative video tool might not be the right instrument for that specific task today.
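One way to enforce that visual anchor is a pre-flight check in whatever script submits video jobs. The Python sketch below is hypothetical throughout. `VideoJob`, its fields, and the “approved stills” set are illustrative and are not part of the Veo 3 or Banana AI interfaces. The check rejects product shots submitted as pure text-to-video. It also flags any reference image that did not come from the approved set of stills.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class VideoJob:
    # Hypothetical job description; field names are illustrative only.
    prompt: str
    reference_image: Optional[str] = None  # ID or path of the anchoring still
    shows_product: bool = False


def validate(job: VideoJob, approved_stills: set[str]) -> list[str]:
    """Return a list of problems; an empty list means the job may be submitted."""
    problems = []
    if job.shows_product and job.reference_image is None:
        problems.append("product shots must be image-to-video: attach an approved still")
    if job.reference_image is not None and job.reference_image not in approved_stills:
        problems.append(f"reference {job.reference_image!r} is not an approved still")
    return problems


approved = {"laptop_hero_v3.png"}
print(validate(VideoJob("person opens laptop", shows_product=True), approved))
print(validate(VideoJob("person opens laptop", "laptop_hero_v3.png", True), approved))
```

Tying every video reference to a still that already passed review keeps the video and still assets drawn from the same approved look.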

The Limits of Algorithmic Intent: Where Automation Hits a Wall

There is a persistent fallacy that more credits or faster models can solve a poorly defined creative brief. No matter how advanced the Banana AI infrastructure becomes, the machine possesses no “intent.” It cannot understand why a specific shade of blue represents “trust” for a financial brand, nor can it understand the cultural nuance of a specific fashion trend.

The “High Cost of the Generate Button” is ultimately the loss of human direction. When we prioritize speed, we stop being directors and start being spectators. We wait to see what the AI gives us, rather than demanding what the brand needs. This shift leads to “hallucinatory” creative—images that look beautiful but mean nothing.

A production-savvy team knows that the most valuable part of the workflow happens before the first prompt is typed. It happens in the definition of the visual style, the selection of the model (Seedream vs. Turbo), and the setting of the parameters. Without this “pilot” at the helm, the pipeline is just burning fuel.

Currently, we are in an era where AI can produce a “finished-looking” image in seconds, but a “finished” image—one that meets legal, brand, and aesthetic standards—still requires a human eye to guide the latent space. We cannot conclude that generative models will soon handle “nuanced brand voice” autonomously. The technology is a professional instrument, like a high-end camera or a synthesizer. It requires a skilled operator who values the control of the dial over the speed of the shutter.

If your team is measuring success by how many images you can produce per hour, you are likely optimizing for the wrong metric. The real win in AI creative workflows is reducing the distance between the director’s intent and the final render. That distance is bridged by control, not by velocity. Moving forward, the most competitive creative teams won’t be the ones with the fastest generation times, but the ones who have mastered the parameters to get it right in three tries instead of three hundred.
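Changing the metric can be as simple as logging two numbers per session. In this Python sketch the session figures are invented to mirror the “three tries instead of three hundred” contrast; they are not measured data. Both hypothetical teams end the afternoon with the same four approved assets. Images per hour ranks the velocity team 100 times higher, while generations per approved asset shows that the same team needed 100 times as many attempts.

```python
from dataclasses import dataclass


@dataclass
class Session:
    # Hypothetical session numbers for illustration only.
    name: str
    generated: int   # total images rendered
    approved: int    # assets that passed brand and legal review
    hours: float

    def images_per_hour(self) -> float:
        return self.generated / self.hours

    def tries_per_approved(self) -> float:
        # float("inf") flags a session where nothing was usable.
        return self.generated / self.approved if self.approved else float("inf")


velocity = Session("velocity team", generated=1200, approved=4, hours=4.0)
control = Session("control team", generated=12, approved=4, hours=4.0)

for s in (velocity, control):
    print(f"{s.name}: {s.images_per_hour():.0f} images/hour, "
          f"{s.tries_per_approved():.0f} tries per approved asset")
```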
